Architecture

Latest revision as of 13:36, 14 December 2015

Introduction

The Mill architecture is a general purpose processor architecture paradigm in the sense that a stack machine or a RISC processor is a processor architecture paradigm.

It is also a processor family architecture in the sense that x86 or ARM are processor family architectures. Any specific Mill processor can be configured and optimized for a wide variety of different tasks and use profiles.

Features

One guiding principle in the design was to look for the edge areas where current CPU designs struggle and to find solutions specifically for those areas.

- Memory Bandwidth: Innovations like Backless Memory and Merging Caches dramatically cut down memory usage in typical workloads.

- Computing Throughput: The massive parallelism enabled by Static Scheduling and the flexible wide issue Encoding pushes MIMD workloads beyond anything possible previously.

- Security: Rethinking the protection primitives to enable fast Context Switches (which makes true microkernels viable, for example) and hiding security-sensitive control data from program access make many common exploits physically impossible on the Mill architecture.

- Multi-Processing: These fast context switches, which require no cache or page translation flushes in a single address space, together with fast optimistic synchronization facilities, make managing many diverse processes and workloads on one machine far more effective and efficient.

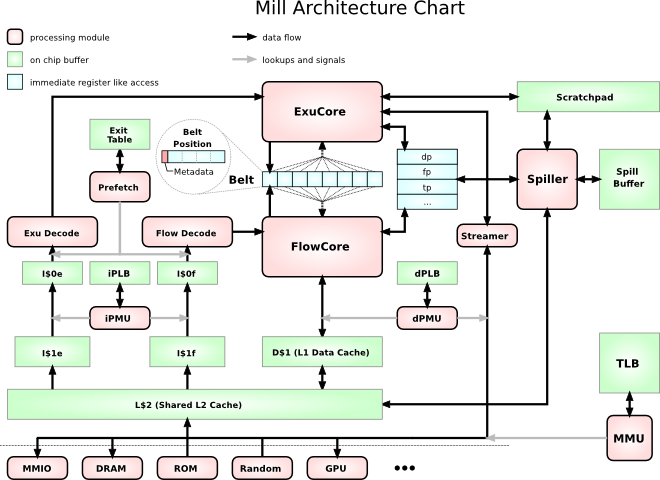

To briefly classify the Mill architecture: it is a statically scheduled, wide issue, in-order Belt architecture, i.e., as in a DSP, all instructions are issued in the order in which they appear in the binary instruction stream.

This approach traditionally has problems dealing with common general purpose workload operations and flows like branches, particularly while-loop execution, as well as with hiding Memory access latency. Those problems have been addressed: Static Scheduling by the Compiler moves most of the work that traditionally had to be done in hardware on every cycle into tasks performed once at compile time. This is where most of the power savings and performance gains come from in comparison to traditional general purpose architectures.
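To make the idea of doing the scheduling work once at compile time more concrete, here is a minimal sketch of a greedy list scheduler. It is an illustration only, not the algorithm used by the Mill compiler or specializer: the `Op` class, the `schedule` function, the issue width, and all latency values are assumptions invented for this example. The only property it relies on is that every operation has a fixed latency known ahead of time, so the issue cycle of each operation can be decided before the program runs.

```python
from dataclasses import dataclass, field


@dataclass
class Op:
    name: str
    latency: int                      # fixed latency in cycles (illustrative value)
    deps: list = field(default_factory=list)  # names of ops whose results are consumed


def schedule(ops, width):
    """Assign an issue cycle to each op, entirely ahead of execution.

    `ops` must be listed so that every op appears after its dependencies.
    `width` is the number of ops that may issue in the same cycle.
    """
    issue = {}      # op name -> cycle in which it issues
    ready_at = {}   # op name -> cycle in which its result becomes available
    used = {}       # cycle -> number of ops already placed in that cycle
    for op in ops:
        # Earliest cycle at which all inputs have retired.
        cycle = max((ready_at[d] for d in op.deps), default=0)
        # Find the first cycle from there on with a free issue slot.
        while used.get(cycle, 0) >= width:
            cycle += 1
        issue[op.name] = cycle
        used[cycle] = used.get(cycle, 0) + 1
        ready_at[op.name] = cycle + op.latency
    return issue


ops = [
    Op("load_a", 3),
    Op("load_b", 3),
    Op("add", 1, ["load_a", "load_b"]),
    Op("mul", 3, ["add"]),
    Op("inc", 1, ["load_a"]),
]
plan = schedule(ops, width=2)
```

With these made-up latencies, both loads issue in cycle 0 and their consumers are placed in cycle 3, when the loaded values are known to be available. No hardware has to discover this dependency at run time, which is the point of the paragraph above.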

Overview

This could be described as the general design philosophy behind the Mill: Remove anything that doesn't directly contribute to computation at runtime from the chip as much as possible, perform those tasks once and optimally in the compiler, and use the freed space for more computation units. This results in vastly improved single core performance through more instruction level parallelism as well as more room for more cores.

There are quite a few hurdles for traditional architectures to actually utilize the large amount of instruction level parallelism provided by many ALUs. Some of the most unique and innovative features of the Mill emerged from tackling those hurdles and bottlenecks.

- The Belt, for example, is the result of having to provide many data sources and drains for all those computational units, interconnecting them without tripping over data dependencies and hazards and without having polynomial growth in the cost of space and power for interconnecting.

- The unusual split stream, variable length, very wide issue Encoding makes the parallel feeding of all those ALUs with instructions possible in a die space and energy efficient way with optimally computed code density.

- The Mill features an exposed latency pipeline. As usual with static scheduling, the latencies of all operations are fixed and known to the compiler for optimal scheduling. The only variable latency instruction is load. Although the load latency may differ from one use to the next, it is explicitly specified at each use. This makes it possible to statically schedule loads as well and hide almost all load latencies.

- Metadata encapsulates normally global program state in operands, eliminating side effects, exposing far more instruction level parallelism, simplifying the instruction set, and increasing code density.

- This heavily aided static scheduling furthermore enables the extensive use of techniques like Phasing, Pipelining, Speculation, and branch Prediction over several jumps with prefetch and a very short pipeline, which minimizes the occurrence and impact of stalls for unhindered Execution.

- A new Memory access model, with caches working entirely on virtual addresses and Protection mechanisms decoupled from address translation, means you never wait for address translation unless the wait is masked by DRAM access anyway.

- The Mill is a processor family with many different member processor cores with very different hardware. It still provides a common binary program format that gets specialized for every specific processor on install. Any operations that are specific to only some of the processors and might not be available in hardware on all members of the Mill family are emulated in software for full compatibility.

- This is also true for any operations that cannot be implemented in hardware with fixed latencies, like divisions. They are realized by the compiler in terms of the other, real hardware operations, which often performs even better than traditional microcode implementations of such instructions.
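As an illustration of the last point, the sketch below builds unsigned division out of shifts, comparisons, and subtractions only, each of which can have a fixed latency. This is the textbook restoring division algorithm, chosen here for clarity; it is an assumption for the sake of the example and not the sequence the Mill toolchain actually emits. The function name `udiv` and the `bits` parameter are hypothetical.

```python
def udiv(n, d, bits=32):
    """Unsigned restoring division using only shift, compare, and subtract.

    Requires 0 <= n < 2**bits and d > 0. Returns (quotient, remainder).
    The loop always runs exactly `bits` times, so the total cost is known
    in advance, which is what a static scheduler needs.
    """
    if d == 0:
        raise ZeroDivisionError("division by zero")
    q = 0
    r = 0
    for i in range(bits - 1, -1, -1):
        # Bring down the next bit of the dividend.
        r = (r << 1) | ((n >> i) & 1)
        # Subtract the divisor whenever it fits and record a quotient bit.
        if r >= d:
            r -= d
            q |= 1 << i
    return q, r


quotient, remainder = udiv(100, 7)
```

Because the sequence consists of ordinary operations, a compiler is free to interleave it with unrelated work in the same instruction stream, which a monolithic microcoded divide would not allow.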

Implementation

The Belt is actually implemented as a big Crossbar that connects the functional unit Slots to the sources. How exactly this Crossbar is implemented is up to the specific processor. Different implementation options work better for different scales.
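The following is a small software model of this idea, offered as a sketch under assumptions rather than a description of any real Mill core. It assumes the commonly described Belt behavior, which this section does not spell out: results are dropped at the front, operands are addressed by their position relative to the front, and the oldest value falls off once the fixed length is exceeded. The class name `Belt`, its methods, and the length are hypothetical. Values are stored once at their source and never move; only the mapping from belt position to source changes, which plays the role of the crossbar selection.

```python
from collections import deque


class Belt:
    def __init__(self, length=8):
        self.length = length
        self.sources = {}                  # source id -> value held at the producer
        self.order = deque(maxlen=length)  # belt position -> source id, newest first
        self.next_id = 0

    def drop(self, value):
        """Record a new result at the front of the belt."""
        src = self.next_id
        self.next_id += 1
        self.sources[src] = value
        if len(self.order) == self.length:
            # The oldest entry is about to fall off the end of the belt.
            del self.sources[self.order[-1]]
        self.order.appendleft(src)         # deque(maxlen) discards the last entry when full
        return src

    def read(self, position):
        """Select the source currently mapped to a belt position (0 = newest)."""
        return self.sources[self.order[position]]


belt = Belt(length=4)
for value in (10, 20, 30):
    belt.drop(value)
newest, oldest = belt.read(0), belt.read(2)
belt.drop(40)
belt.drop(50)   # 10 falls off the belt here
```

After the last drop, position 0 holds 50 and position 3 holds 20. A real implementation would choose between different crossbar designs depending on the scale of the processor, as noted above.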