Memory

Many of the Mill's power and performance gains, and many of its security improvements over conventional architectures, come from the various facilities of its memory management. Most subsystems have their own dedicated pages. This page is an overview.

Overview

The Mill architecture is a 64-bit architecture; there are no 32-bit Mills. For this reason it is possible, and indeed prudent, to adopt a single address space (SAS) memory model. All threads and processes share the same address space, so any address points to the same location for every process. To do this securely and efficiently, memory access protection and address translation have been split into two separate modules, whereas conventional architectures conflate those two tasks into one.

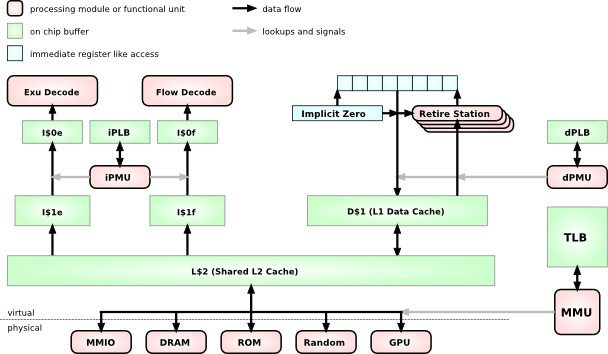

As can be seen from this rough system chart, there is a combined L2 cache, although some low-end implementations may choose to omit it for space and energy reasons. The Mill has facilities that make an L2 cache less critical.

The L1 caches are separate for instructions and data, and beyond that, the instruction caches are separate for ExuCore instructions and FlowCore instructions. Smaller, more specialized caches can be made faster and more efficient in many regards, chiefly because of shorter signal paths.

The D$1 data cache feeds the retire stations on load operations and receives the values from store operations.

Protection

All protection happens by defining protection attributes on virtual address regions. This happens above the level 1 caches, and separately for instructions and data with different attributes: execute and portal for instructions, read and write for data. The iPLB and dPLB lookup tables are specialized and can be small and fast. Even better optimizations exist in the well known regions for the most common cases. More on this under Protection.
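As a rough illustration of region-based protection, the following sketch models a PLB as a list of address ranges with rights. The `Region` layout, the `allowed` helper and the rule that a single region must cover the whole access are assumptions made for this example, not a description of the actual hardware tables.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Region:
    """One protection entry (hypothetical layout): an address range plus rights."""
    lower: int         # first byte covered
    upper: int         # one past the last byte covered
    rights: frozenset  # e.g. {"read", "write"} for data, {"execute", "portal"} for code

def allowed(plb, address, width, right):
    """True if a single region grants `right` for every byte of the access."""
    return any(
        r.lower <= address and address + width <= r.upper and right in r.rights
        for r in plb
    )

# Separate tables for data and instructions, each with its own attribute set.
dplb = [Region(0x1000, 0x2000, frozenset({"read", "write"})),
        Region(0x2000, 0x3000, frozenset({"read"}))]
iplb = [Region(0x8000, 0x9000, frozenset({"execute"}))]
```

Because the tables are keyed on virtual address ranges rather than pages, a check like this needs no translation step at all.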

Address Translation

Because address translation is separated from access protection, and because all processes share one address space, the translation and TLB accesses can be moved below the caches. In fact the TLB only ever needs to be accessed when there is a cache miss or evict. In that case there is a 300+ cycle stall anyway, which means the TLB can be big, flat, slow and energy efficient. The few extra cycles for a TLB lookup are largely masked by the system memory access.

On conventional machines the TLB is right in the critical path between the top level cache and the functional units. This means the TLB must be small and fast, which makes it power hungry and forces a complex hierarchy. And you still spend up to 20-30% of your cycles and power budget on TLB stalls and TLB hierarchy shuffling.
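The Mill arrangement can be pictured with a toy model in which the cache is indexed by virtual address and translation is consulted only on a miss. The class name, the page size and the dictionary-based tables are all assumptions for illustration.

```python
PAGE = 4096  # assumed page size, for illustration only

class ToyHierarchy:
    def __init__(self, page_table, dram):
        self.cache = {}               # virtual address -> value
        self.page_table = page_table  # virtual page -> physical page
        self.dram = dram              # physical address -> value
        self.tlb_lookups = 0

    def load(self, vaddr):
        if vaddr in self.cache:       # hit: no translation needed
            return self.cache[vaddr]
        self.tlb_lookups += 1         # miss: translate, then go to memory
        page, offset = divmod(vaddr, PAGE)
        paddr = self.page_table[page] * PAGE + offset
        value = self.dram[paddr]
        self.cache[vaddr] = value
        return value

mem = ToyHierarchy(page_table={2: 7}, dram={7 * PAGE + 16: 42})
first = mem.load(2 * PAGE + 16)   # misses the cache, one TLB lookup
second = mem.load(2 * PAGE + 16)  # hits the cache, no TLB lookup
```

In this model the translation cost is paid once per miss, next to the much larger memory access, rather than on every load.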

Reserved Address Space

The virtual address space is 60 bits wide, because the top 4 bits of a virtual address are reserved for system use such as garbage collection.
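The split between reserved bits and address bits comes down to simple masking. The helper name below is hypothetical, and the sketch says nothing about how the reserved bits are actually interpreted.

```python
ADDRESS_BITS = 60
ADDRESS_MASK = (1 << ADDRESS_BITS) - 1

def split_pointer(pointer):
    """Split a 64-bit pointer into (reserved top 4 bits, 60-bit virtual address)."""
    pointer &= (1 << 64) - 1  # keep only 64 bits
    return pointer >> ADDRESS_BITS, pointer & ADDRESS_MASK
```

For example, `split_pointer(0xA000_0000_0000_1234)` yields `(0xA, 0x1234)`.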

The top part of this 60-bit address space is reserved to facilitate fast protection domain (turf) switches with secure stacks. More on this on the respective pages.

Retire Stations

Retire stations serve the load/store FUs or Slots for the load operation. They implement the deferred load operation and are conceptually part of the FlowCore. The load operation is explicitly deferred, i.e. it has a parameter which determines exactly at which point in the future it has to make the value available and drop it on the Belt. This explicit, static but parametrized scheduling hides almost all cache latencies in memory access. A DRAM stall still has the same cost, but due to innovations in cache access and Prefetch the number of DRAM accesses has been vastly reduced, too.

Another important aspect of the deferred load operation is that it does not load the value at the point where the load is issued, but at the point where it is scheduled to yield the value. This makes the load hardware immune to Aliasing, which means the compiler can stop worrying about aliasing completely and optimize aggressively.

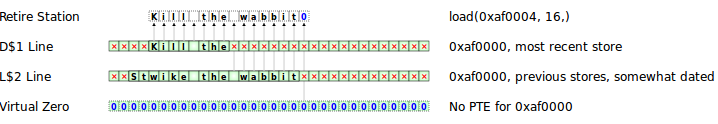

This is achieved by having the active retire stations, i.e. the retire stations that have a load pending to return, monitor the store wires for stores to their address. Whenever they see a store to their address, they just copy the value for later return.
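The snooping behavior can be sketched as a small state machine. The class, its method names and the cycle-counting scheme are assumptions for this example; real retire stations are hardware, and the sketch ignores widths and partial overlaps.

```python
class RetireStation:
    """Toy retire station holding one pending deferred load (hypothetical model)."""

    def __init__(self, address, value_at_issue, delay):
        self.address = address
        self.value = value_at_issue  # provisional value, may be overwritten
        self.cycles_left = delay     # the load's explicit deferral

    def snoop_store(self, address, value):
        # A store to the watched address replaces the pending value.
        if address == self.address:
            self.value = value

    def tick(self):
        # Returns the value on the cycle the load retires, otherwise None.
        self.cycles_left -= 1
        return self.value if self.cycles_left == 0 else None

def demo():
    memory = {0x40: 1}
    station = RetireStation(0x40, memory[0x40], delay=3)
    results = [station.tick()]     # cycle 1: still pending
    memory[0x40] = 7               # an intervening store to the same address
    station.snoop_store(0x40, 7)
    results.append(station.tick()) # cycle 2: still pending
    results.append(station.tick()) # cycle 3: retires with the stored value
    return results
```

In this model `demo()` returns `[None, None, 7]`: the load sees the store that happened between its issue and its retirement.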

Implicit Zero and Virtual Zero

Loads from uninitialized but accessible memory always yield zero on the Mill. There are two mechanisms to ensure that.

The first is virtual zero. When a load misses the caches and also misses in the TLB because there is no PTE for the address, there have been no stores to the address yet, and in this case the MMU returns zero for the load to bring back to the retire station. The big gain is that the OS doesn't have to explicitly zero out new pages, which would cost a lot of bandwidth and time, and accesses to uninitialized memory only take the time of the cache and TLB lookups instead of requiring memory round trips.
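A minimal sketch of the virtual zero path, assuming dictionary-based stand-ins for the cache, page table and DRAM; the function name and the page size are hypothetical.

```python
def load_with_virtual_zero(vaddr, cache, page_table, dram, page_size=4096):
    """Toy load: cache first, then translation, with zero for untouched memory."""
    if vaddr in cache:
        return cache[vaddr]
    page, offset = divmod(vaddr, page_size)
    if page not in page_table:  # no PTE: nothing was ever stored here
        return 0                # virtual zero, no DRAM access at all
    return dram[page_table[page] * page_size + offset]
```

With empty tables, `load_with_virtual_zero(0x5000, {}, {}, {})` returns 0 without touching `dram`.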

This also has security benefits, since no one can snoop on memory garbage piles.

An optimization of this for data stacks is the implicit zero. The problems of uninitialized memory and wasted bandwidth that virtual zero addresses for general memory accesses are compounded for the data stack, because of the high frequency of new accesses and because recently written data is so often never used again. On conventional architectures this causes a staggering amount of cache thrashing and superfluous memory accesses.

The stackf instruction allocates a new stack frame, i.e. a number of new cache lines, but it does so just by putting markers for those cache lines into the implicit zero registers.

When a subsequent load happens on a newly allocated stack frame, the hardware knows it is a stack access due to the well known region and stack frame Registers. The hardware doesn't even need to check the dPLB or the top level caches, it just returns zero. So while virtual zero returns zero with only the cost of the cache accesses for uninitialized memory, for the most frequent case of uninitialized stack accesses you don't even have top level cache delays, but immediate access. And of course it also makes it impossible to snoop on old stack frames.

Only when a store happens on a new stack frame will an actual new cache line be allocated, the new value be written and the rest of the cache line be set to zero, all by hardware.
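The implicit zero behavior can be modelled roughly as follows. The line size, the class and its methods are assumptions for illustration, and only addresses inside allocated frames are modelled.

```python
LINE = 64  # assumed cache line size in bytes

class ToyStack:
    def __init__(self):
        self.implicit_zero = set()  # line numbers marked as fresh by stackf
        self.cache = {}             # line number -> bytearray of LINE bytes

    def stackf(self, base, lines):
        """Allocate a frame by marking its lines; no cache line is touched."""
        first = base // LINE
        self.implicit_zero.update(range(first, first + lines))

    def load(self, addr):
        line, offset = divmod(addr, LINE)
        if line in self.implicit_zero:
            return 0                # answered without any cache access
        return self.cache[line][offset]

    def store(self, addr, byte):
        line, offset = divmod(addr, LINE)
        if line in self.implicit_zero:
            # First store to a fresh line: allocate it zero-filled.
            self.implicit_zero.discard(line)
            self.cache[line] = bytearray(LINE)
        self.cache.setdefault(line, bytearray(LINE))[offset] = byte
```

After `stackf(0, 2)`, loads from either line return zero and the cache stays empty; the first store to a line allocates exactly that line, with every other byte in it reading as zero.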

Data Cache

All data caches and shared caches have 9 bits per byte. The additional bit is the valid bit. Whenever a new cache line is allocated, always because of a store to a new location, the new value is set for the bytes of the store and their valid bits are set. All other bytes remain invalid.

Backless Memory

All stores are to the top of the data cache, and thus are neither write-back nor write-through. And, by definition, stores cannot miss the cache and don't involve memory. Cache lines can be evicted to lower levels. And when they are loaded again they are hoisted up again. When there are cache lines for the same address on multiple levels, they get merged on evict or hoist, with the upper level winning if both bytes are valid. In the above example, after the width 16 load, you would have the full merged string "StKill the wabbit\0" on the top level cache.
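The merge rule can be sketched with per-byte validity, using `None` for an invalid byte. The tiny line size and the helper names are assumptions for this example and are unrelated to the string example mentioned above.

```python
LINE = 8  # deliberately tiny line size, for illustration only

def new_line(offset, data):
    """A freshly allocated line: only the stored bytes are valid."""
    assert offset + len(data) <= LINE
    line = [None] * LINE
    line[offset:offset + len(data)] = list(data)
    return line

def merge(upper, lower):
    """Merge two lines for the same address; the upper level wins where valid."""
    return [u if u is not None else l for u, l in zip(upper, lower)]

lower = new_line(0, b"wxyz")  # older store, since evicted to a lower level
upper = new_line(2, b"AB")    # newer store, created a new line at the top
merged = merge(upper, lower)
```

Here the valid bytes of `merged` spell `wxAB`, and the last four bytes remain invalid because neither level ever stored to them.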

All this usually happens without any physical memory involvement, completely in cache. It is backless memory and a vast improvement in all access times. And because all this happens in cache with the valid bit mechanisms, there are also no alignment penalties for loads and stores of data types of different widths. The load and store operations only support power of two widths for the data, but they can be on addresses of any alignment without penalty.

If there are still invalid bytes left at the lowest cache level, and there is a PTE for the cache line, then of course the remaining bytes are taken from memory, and the line is hoisted from memory and merged. But for that to happen a line first has to be completely evicted to physical memory, and then new writes without intermediate loads have to have created new lines in cache for the addresses in the line.

As a result the cases where actual access to physical memory is necessary have been vastly reduced. And often the temporary data in smaller subroutines never gets into physical memory at all, the whole lifetime of the objects has been spent in cache.

Memory Allocation

Only when a lowest level cache line is evicted does an actual memory page get allocated. Even this happens completely in hardware, with cache line sized pages and a bit map allocator that draws from a hierarchy of larger pages. Bytes that are still invalid are set to 0 by the MMU.

It is those larger pages that are managed by the OS in traps raised by the MMU when it runs low on backing memory pages.

A big advantage of this allocation behavior is that in the vast majority of cases memory is only written once a larger number of writes has accumulated in cache, and they are then written all at once. This is also the case with write-back caches, but in contrast to them you don't always need to evict a cache line on a read miss, because you can often just merge the memory backing into the invalid bytes of the existing cache line. Only a read from a truly cold and unpredicted, and thus unprefetched, line triggers an evict that causes a stall. A store into a cold line triggers an evict too, after it has cascaded down the caches, but this evict almost never causes a stall, since the evicted line is most likely a cold line.

Instruction Cache

The instruction caches are of course read only. As mentioned before, they are specialized to their instruction stream. This means they are managed differently from the data caches, to facilitate better instruction Prefetch and Decode without bubbles in the pipeline. Prefetch also handles the prefetching of loads for the shared and data caches. More on this on the respective pages.

Sequential Consistency

All memory accesses happen in the order they occur in the program. This is sequential consistency. No access reordering happens, and consequently there is no need for memory fences and the like.

Loads and stores may be placed in the same instruction or retire in the same cycle, and as such are issued and executed in parallel. But the order they retire in is still determined by the order they appear in the instruction, and thus by the order of the Slots they were issued into.

This order is not only maintained on a single core, but a defined order for all cores on a chip is maintained with the cache coherency protocol.

Spiller

The spiller is a dedicated central hardware module that preserves internal core state for as long as it may be needed. To do so it may save internal core state to dedicated DRAM areas, the spiller space. This memory is not accessible by any other mechanism, and no other hardware mechanism can interfere with the spiller or meddle with its internal state. Spiller memory accesses therefore don't need to go through the Protection layer, since no one can make the spiller do anything insecure. The spiller also doesn't go through the cache hierarchy. It still goes through address translation, but this is mainly because special system tools like debuggers occasionally need to read spiller state, and because the OS needs to know where the spiller area is so that it doesn't accidentally allocate it for something else. If it weren't for that, the spiller could write to and read from dedicated physical memory directly, and no other system component could ever access it, because they would all have to go through address translation, which would provide no entry for those physical pages.

The Spiller has its own more detailed page.

Streamer

Rationale

Memory latency is the main bottleneck that dictates how modern processors are designed. Memory latency is the reason why all the expensive out-of-order hardware is so prevalent on virtually all general purpose processors since the 60s. If anything the steadily increasing gap in frequency between memory and processor cores makes the latency even more felt today.

So hiding the latency of memory accesses and reducing the number of memory accesses are the primary goals in any processor architecture. Both are mainly achieved with the use of caches. Sophisticated Prediction and Prefetch fill the caches as far in advance as possible. Load and store deferring, Pipelining and Speculation hide the cache latencies and increase the level of ILP for loads and stores by making memory accesses less dependent on each other. And the cache management protocols determine the number of actual memory accesses.

All three aspects have new solutions on the Mill. Generally those solutions are not really more powerful or faster than the solutions of conventional out-of-order architectures. They are only vastly cheaper. Truly random and unpredictable work loads still can't be helped though.

Media

Presentation on the Memory Hierarchy by Ivan Godard - Slides