Pipeline

The functional units are the workhorses of the processor, where the computing and data manipulation happens. They are organized into pipelines, and each pipeline is addressed by a dedicated decoding hardware unit called a Slot.


Overview

A pipeline is a cluster of functional units (FUs) that contain circuits such as multipliers, adders, shifters, or floating-point units, but also circuits for calls, branches, loads, and stores. The grouping generally follows the phases of the operations the functional units implement, and it also depends on which Slot issues the operations to them.

All the FUs in a pipeline also share the same two input channels from the belt, at least in the phases that take inputs from the belt.

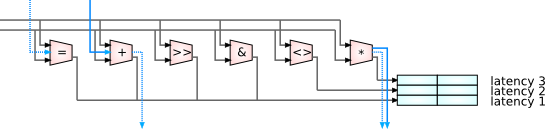

The different operations grouped into one pipeline may have different latencies, i.e. they take a different number of cycles to complete. Each FU in the pipeline can still be issued one new operation every cycle, because the FUs are fully pipelined.

Operations issued in different cycles may retire in the same cycle. To catch all these results, each pipeline has a latency register for every latency possible in it, into which the results are written. These registers are dedicated: only their own pipeline can write into them.
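A minimal sketch of why one register per latency suffices, assuming a hypothetical pipeline whose operation names and latencies (`add`, `shift`, `mul`) are made up for illustration. It also assumes the Slot issues at most one operation per cycle into the pipeline. Under that assumption, two results that retire in the same cycle always have different latencies, so they land in different latency registers.

```python
from collections import defaultdict

# Hypothetical pipeline: operation name -> latency in cycles (illustrative only).
LATENCIES = {"add": 1, "shift": 1, "mul": 3}


def simulate(issues):
    """Record which latency register each result is written into.

    `issues` is a list of (cycle, op, value) tuples. Returns a dict mapping
    retirement cycle -> {latency: value}.
    """
    retired = defaultdict(dict)
    issue_cycles = set()
    for cycle, op, value in issues:
        # Assumption: at most one issue per cycle into this pipeline; because
        # the FUs are fully pipelined, an issue may happen every cycle.
        assert cycle not in issue_cycles, "one issue per cycle"
        issue_cycles.add(cycle)
        latency = LATENCIES[op]
        done = cycle + latency
        # One dedicated register per latency: same-cycle retirements never collide.
        assert latency not in retired[done]
        retired[done][latency] = value
    return dict(retired)
```

For example, a `mul` issued in cycle 0 and an `add` issued in cycle 2 both retire in cycle 3, one into the latency-3 register and one into the latency-1 register.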

Operands and Results

Dedicating the latency registers to their pipeline reduces the number of connecting circuits between all the functional units and registers. Those same latency registers are what is visible at a higher level as Belt locations. The trick is that they are addressed differently for writing, when new values are created, and for reading, when they serve as sources. As mentioned above, the registers can only be written by their dedicated pipeline; they are locally addressed. But they can serve as belt operands globally to all pipelines. Just as the global addressing cycles through all the registers for reading, the local addressing cycles, per pipeline, through that pipeline's dedicated latency registers for writing. Unlike the belt addressing, this local addressing is completely hidden from the outside; it is purely machine-internal state.
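The two addressing schemes can be sketched in a toy model. Everything here is a hypothetical illustration, not the actual hardware: each pipeline uses its latency registers as a ring with a hidden local write cursor, while reads use a single global, newest-first ordering of all live values, standing in for belt positions.

```python
class Machine:
    """Toy model: per-pipeline local write cursors, one global read view.

    Assumptions (hypothetical): each pipeline owns `regs_per_pipe` registers
    used as a ring, and belt position 0 is the newest value across all pipelines.
    """

    def __init__(self, n_pipes, regs_per_pipe):
        self.regs = [[None] * regs_per_pipe for _ in range(n_pipes)]
        self.cursor = [0] * n_pipes  # hidden local write address per pipeline
        self.order = []              # (pipe, reg) locations, newest first

    def write(self, pipe, value):
        # Only the owning pipeline writes, using its own local cursor.
        reg = self.cursor[pipe]
        self.regs[pipe][reg] = value
        self.cursor[pipe] = (reg + 1) % len(self.regs[pipe])
        # The reused register no longer holds its old belt value.
        self.order = [loc for loc in self.order if loc != (pipe, reg)]
        self.order.insert(0, (pipe, reg))

    def read(self, belt_pos):
        # Any pipeline may read any live value by its global belt position.
        pipe, reg = self.order[belt_pos]
        return self.regs[pipe][reg]
```

Writing `"a"` and `"c"` from pipeline 0 and `"b"` from pipeline 1 in between makes belt positions 0, 1, 2 read `"c"`, `"b"`, `"a"`, even though no reader ever sees the per-pipeline cursors.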

Result Replay

Each pipeline actually has twice as many latency registers as it would need just to hold the values produced by its functional units. This is because while an operation is in flight over several cycles, a call or interrupt can happen and the frame changes. The operations executing in the new frame need places to store their results, but so do the operations still running for the old frame.
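A minimal sketch of the doubled registers, assuming (hypothetically) that the two banks are selected by frame parity and that each in-flight operation remembers the frame it was issued in. A result that retires after a call therefore lands in the old frame's bank instead of overwriting a result of the new frame.

```python
class DoubledRegisters:
    """Hypothetical model: two banks of per-latency registers for one pipeline."""

    def __init__(self, max_latency):
        self.banks = [[None] * (max_latency + 1) for _ in range(2)]
        self.frame = 0
        self.in_flight = []  # (retire_cycle, frame, latency, value)

    def issue(self, cycle, latency, value):
        # Tag the operation with the frame that issued it.
        self.in_flight.append((cycle + latency, self.frame, latency, value))

    def call(self):
        # The frame changes while older operations may still be in flight.
        self.frame += 1

    def tick(self, cycle):
        # Retire everything due this cycle into its own frame's bank.
        still_running = []
        for retire, frame, latency, value in self.in_flight:
            if retire == cycle:
                self.banks[frame % 2][latency] = value
            else:
                still_running.append((retire, frame, latency, value))
        self.in_flight = still_running
```

With a single bank, a latency-3 result issued in the new frame would overwrite the old frame's latency-3 result one cycle after it retired; with two banks both survive.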

Calls can of course nest, and then the latency registers are no longer enough. There is also other frame-specific state. This is where the Spiller comes in: it monitors frame transitions and saves and restores all the frame-specific state that might still be needed, safely and transparently in the background. A big part of that is saving the latency register values that would be overwritten in the new frames and restoring them on return.

Saving results when control flow is interrupted or suspended in this way is called result replay. It contrasts with execution replay on conventional machines, which throw away all transient state in that case and then restart all the interrupted, potentially very expensive, computations from the beginning.
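Result replay across nested calls can be sketched as follows. This is a hypothetical model, not the Spiller's real design: it assumes two register banks per pipeline selected by call depth, and it saves a bank only when a deeper call is about to reuse it. On return the saved values are restored, so no interrupted computation has to be executed again.

```python
class Spiller:
    """Hypothetical sketch: save and restore a register bank across nested calls."""

    def __init__(self):
        self.banks = [{}, {}]  # two banks, as with the doubled latency registers
        self.depth = 0
        self.saved = []        # spilled bank contents, innermost last

    def bank(self):
        return self.banks[self.depth % 2]

    def call(self):
        self.depth += 1
        if self.depth >= 2:
            # The new frame reuses the bank of frame depth-2: save it first.
            self.saved.append(dict(self.bank()))
            self.bank().clear()

    def ret(self):
        if self.depth >= 2:
            # Restore frame depth-2's results into the bank it will use again.
            self.bank().clear()
            self.bank().update(self.saved.pop())
        self.depth -= 1
```

The outer frame's values come back exactly as they were saved; the interrupted computations that produced them are never rerun.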

Interaction between Pipelines

The main way pipelines interact with each other is by exchanging operands over the belt. The results one operation drops onto the belt become the operands of the next.

But each pipeline is limited to two belt operands, and some common, more complex operations need more than two operands or have additional implicit operands on conventional architectures.

For such cases, certain functional units in adjacent pipelines work together, pooling their input and compute resources to implement those operations cooperatively. This process is called Ganging.

The two pipelines coordinate in two ways. First, there are special two-slot operations that the decoder issues to both of the corresponding pipelines. Second, there are simple, fast data connections between neighboring pipelines, shown as the blue arrows in the diagram above. Through these side channels, when triggered by those special operations, additional input operands, transient results, or just operation state predicates can be passed from one pipeline to another without going through the Crossbar wiring, even within the same phase of the same cycle. The side channels are used only by those specially defined operations.
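A minimal sketch of the ganging idea, using a hypothetical three-operand operation `a * b + c` chosen only for illustration (it is not claimed to be a specific Mill operation). Each pipeline reads at most two belt operands; the left one computes the product and passes it to its neighbor over a modeled side channel, and the right one adds its own belt operand.

```python
from dataclasses import dataclass


@dataclass
class SideChannel:
    """Hypothetical model of the fast link between two neighboring pipelines."""
    value: object = None


def ganged_mul_add(a, b, c):
    """Compute a * b + c as a hypothetical two-slot ganged operation."""
    link = SideChannel()

    def left_pipeline(x, y):
        # Two belt operands; the product is a transient result that goes
        # over the side channel instead of onto the belt.
        link.value = x * y

    def right_pipeline(z):
        # One belt operand plus the neighbor's transient result.
        return link.value + z

    left_pipeline(a, b)
    return right_pipeline(c)
```

The point of the split is that neither half ever needs more than two belt inputs, while the intermediate value bypasses the crossbar entirely.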

These data paths can also be used to save result-replay values in the latency registers of a neighboring slot when a pipeline's own latency registers are full, so the Spiller only has to intervene when it really needs to.
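The placement order described above can be sketched as a simple policy function. The function name and the single `capacity` parameter for both pipelines are hypothetical simplifications: a value goes into the pipeline's own latency registers if there is room, then into a neighbor's over the side channel, and only as a last resort to the Spiller.

```python
def save_replay_value(own, neighbor, spill, value, capacity):
    """Hypothetical policy for saving one result-replay value.

    `own` and `neighbor` model the two pipelines' free latency registers,
    `spill` models the Spiller, and `capacity` is an assumed register count.
    Returns where the value was placed.
    """
    if len(own) < capacity:
        own.append(value)
        return "own"
    if len(neighbor) < capacity:
        # Moved over the side channel, no crossbar or Spiller involved.
        neighbor.append(value)
        return "neighbor"
    spill.append(value)
    return "spiller"
```

With a capacity of two, five values are placed as own, own, neighbor, neighbor, and only the fifth reaches the Spiller.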

Kinds of Pipelines

Each slot on a Mill processor is specified to have its own capabilities through the specific set of functional units in its connected pipeline. This can go so far that every slot pipeline on a chip is unique, but usually this is not the case. The basic reader slots, the load and store units, and the call slots tend to be quite uniform. The ALU pipelines are a little more diverse, depending on the workloads the processor is configured for, but still not wildly different. But the Mill architecture certainly is flexible enough to implement special-purpose operations and functional units.

Consequently, the different blocks in an instruction encode the operations for different kinds of slots. If the Specification calls for it, every operation slot has its own unique operation encoding. All this is automatically tracked and generated by the Mill Synthesis software stack.
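To make the idea of per-slot encodings concrete, here is a hypothetical sketch: a made-up specification lists the operations each slot supports, and each slot gets its own opcode table sized to that list. The slot names, operation names, and the simple fixed-width opcode scheme are all assumptions for illustration; the encodings the Mill tools actually generate may be quite different.

```python
import math

# Hypothetical specification: slot -> operations its pipeline supports.
SPEC = {
    "reader0": ["const", "copy"],
    "alu0": ["add", "sub", "mul", "shl", "shr"],
    "alu1": ["add", "sub", "and"],
    "load0": ["load"],
}


def slot_encodings(spec):
    """Give every slot its own opcode table, sized to that slot's op set."""
    tables = {}
    for slot, ops in spec.items():
        # Assumed fixed-width opcode: enough bits to number this slot's ops.
        bits = max(1, math.ceil(math.log2(len(ops))))
        tables[slot] = {"bits": bits, "opcodes": {op: i for i, op in enumerate(ops)}}
    return tables
```

Because each table depends only on its own slot's operation list, a slot with five operations needs wider opcodes than one with three, and the same operation name can have different codes in different slots.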

Media

Presentation on Execution by Ivan Godard - Slides

Presentation on the Belt and Pipelines by Ivan Godard - Slides